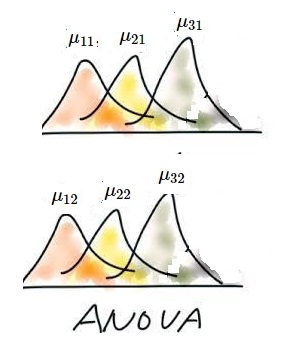

For example, consider the following.

| $(\sharp_{11})$ | Data $x_{111}, x_{112}, x_{113}, \ldots, x_{11n}$ are obtained from the normal distribution $N(\mu_{11}, \sigma)$ |

| $(\sharp_{21})$ | Data $x_{211}, x_{212}, x_{213}, \ldots, x_{21n}$ are obtained from the normal distribution $N(\mu_{21}, \sigma)$ |

| $(\sharp_{31})$ | Data $x_{311}, x_{312}, x_{313}, \ldots, x_{31n}$ are obtained from the normal distribution $N(\mu_{31}, \sigma)$ |

| $(\sharp_{12})$ | Data $x_{121}, x_{122}, x_{123}, \ldots, x_{12n}$ are obtained from the normal distribution $N(\mu_{12}, \sigma)$ |

| $(\sharp_{22})$ | Data $x_{221}, x_{222}, x_{223}, \ldots, x_{22n}$ are obtained from the normal distribution $N(\mu_{22}, \sigma)$ |

| $(\sharp_{32})$ | Data $x_{321}, x_{322}, x_{323}, \ldots, x_{32n}$ are obtained from the normal distribution $N(\mu_{32}, \sigma)$ |

How should we answer it?

$\S$7.3.1: Preparation

As a generalization of the simultaneous normal observable (7.14), consider the observable ${\mathsf O}_G^{abn} = (X(\equiv {\mathbb R}^{abn}), {\mathcal B}_{\mathbb R}^{abn}, {{{G}}^{abn}} )$ in $L^\infty (\Omega ( \equiv {\mathbb R}^{ab} \times {\mathbb R}_+))$ defined by

\begin{align} & [{{{G}}}^{abn} (\widehat{\Xi})] (\omega) \nonumber \\ = & \frac{1} {({ {\sqrt{2 \pi } \sigma} })^{abn}} \underset{ \widehat{\Xi} } {\int \cdots \int} \exp[- \frac{ \sum_{i=1}^a \sum_{j=1}^b \sum_{k=1}^n (x_{ijk} - \mu_{ij} )^2 }{2 \sigma^2} ] \times_{k=1}^n \times_{j=1}^b \times_{i=1}^a d{x_{ijk} } \nonumber \\ & \qquad ( \forall \omega =((\mu_{ij})_{i=1,2, \ldots, a,j=1,2, \ldots, b}, \sigma) \in \Omega = {\mathbb R}^{ab} \times {\mathbb R}_+ , \widehat{\Xi} \in {\mathcal B}_{\mathbb R}^{abn} ) \tag{7.31} \end{align}

Accordingly, consider the parallel simultaneous normal measurement:

\begin{align} {\mathsf M}_{L^\infty ({\mathbb R}^{ab} \times {\mathbb R}_+ )} ( {\mathsf O}_G^{{{abn}}} = (X(\equiv {\mathbb R}^{{{abn}}}), {\mathcal B}_{\mathbb R}^{{{abn}}}, {{{G}}^{{{abn}}}} ), S_{[(\mu=(\mu_{ij}\;|\; i=1,2,\cdots, a, j=1,2,\cdots,b ), \sigma )]} ) \end{align}

Here, each $\mu_{ij}$ is decomposed as

\begin{align} \mu_{ij} & = \overline{\mu} (= \mu_{\bullet \bullet } = \frac{\sum_{i=1}^a \sum_{j=1}^b \mu_{ij} }{ab}) \nonumber \\ & \quad + \alpha_i (= \mu_{i \bullet} - \mu_{\bullet \bullet } = \frac{\sum_{j=1}^b \mu_{ij} }{b} - \frac{\sum_{i=1}^a \sum_{j=1}^b \mu_{ij} }{ab} ) \nonumber \\ & \quad + \beta_j (=\mu_{\bullet j } - \mu_{\bullet \bullet } = \frac{\sum_{i=1}^a \mu_{ij} }{a} - \frac{\sum_{i=1}^a \sum_{j=1}^b \mu_{ij} }{ab} ) \nonumber \\ & \quad + {(\alpha \beta)}_{ij} (=\mu_{ij} -\mu_{i \bullet}-\mu_{ \bullet j}+ \mu_{\bullet \bullet } ) \tag{7.32} \end{align}
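
The decomposition (7.32) can be checked numerically. The following is a minimal sketch in plain Python, assuming the cell means are given as a nested list `mu[i][j]`; the function name `decompose` and the $3 \times 2$ table are hypothetical and chosen only for illustration.

```python
def decompose(mu):
    """Split an a-by-b table of cell means into the four terms of (7.32)."""
    a, b = len(mu), len(mu[0])
    grand = sum(sum(row) for row in mu) / (a * b)                        # mu_{..}
    row_mean = [sum(row) / b for row in mu]                              # mu_{i.}
    col_mean = [sum(mu[i][j] for i in range(a)) / a for j in range(b)]   # mu_{.j}
    alpha = [r - grand for r in row_mean]                                # row effects
    beta = [c - grand for c in col_mean]                                 # column effects
    inter = [[mu[i][j] - row_mean[i] - col_mean[j] + grand
              for j in range(b)] for i in range(a)]                      # interaction terms
    return grand, alpha, beta, inter


# Hypothetical 3x2 table of cell means (illustration only).
mu = [[1.0, 2.0], [3.0, 6.0], [5.0, 7.0]]
grand, alpha, beta, inter = decompose(mu)

# Re-assemble mu_{ij} from the four terms of the decomposition.
rebuilt = [[grand + alpha[i] + beta[j] + inter[i][j] for j in range(2)]
           for i in range(3)]
```

In this sketch the row effects `alpha` and the column effects `beta` each sum to zero, which is the property used later to reduce the null hypothesis to a single point.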

Also, put

\begin{align} &X={\mathbb R}^{abn} \ni x = (x_{ijk})_{i=1,2,\ldots,a,\; j=1,2,\ldots,b, \; k=1,2, \ldots, n} \nonumber \\ & x_{ij\bullet}= \frac{\sum_{k=1}^{n}x_{ijk}}{n}, \quad x_{i \bullet \bullet} =\frac{\sum_{j=1}^b \sum_{k=1}^n x_{ijk}}{bn}, \quad x_{ \bullet j \bullet} =\frac{\sum_{i=1}^a \sum_{k=1}^n x_{ijk}}{an}, \quad \nonumber \\ & x_{ \bullet \bullet \bullet} =\frac{\sum_{i=1}^a \sum_{j=1}^b \sum_{k=1}^n x_{ijk}}{abn} \quad \tag{7.33} \end{align}

$\S$7.3.2: The null hypothesis:$\quad \mu_{1 \bullet}=\mu_{2 \bullet}=\cdots =\mu_{a \bullet}=\mu_{\bullet \bullet }$

Now put

\begin{align} \Theta = {\mathbb R}^a \tag{7.34} \end{align}

and define the system quantity $\pi_1 : \Omega (= {\mathbb R}^{ab} \times {\mathbb R}_+ ) \to \Theta (= {\mathbb R}^a)$ by

\begin{align} & {\small \Omega = {\mathbb R}^{ab } \times {\mathbb R}_+ \ni \omega =((\mu_{ij})_{i=1,2, \ldots, a,j=1,2, \ldots, b}, \sigma) \mapsto \pi_1(\omega) = (\alpha_i)_{i=1}^a (= ( \mu_{i \bullet} - \mu_{\bullet \bullet})_{i=1}^a ) \in \Theta = {\mathbb R}^a } \\ & \tag{7.35} \end{align}

Define the null hypothesis $H_N ( \subseteq \Theta = {\mathbb R}^a)$ by

\begin{align} H_N & = \{ (\alpha_1, \alpha_2, \ldots, \alpha_a) \in \Theta = {\mathbb R}^a \;:\; \alpha_1=\alpha_2= \ldots= \alpha_a= \alpha \} \tag{7.36} \\ & = \{ ( \overbrace{0, 0, \ldots, 0}^{a} ) \} \tag{7.37} \end{align}

Here, "(7.36)$\Leftrightarrow$(7.37)" holds because the $\alpha_i$ always sum to zero, so their common value $\alpha$ must be $0$:

\begin{align} a \alpha=\sum_{i=1}^a \alpha_i =\sum_{i=1}^a (\mu_{i \bullet} - \mu_{\bullet \bullet }) = \frac{\sum_{i=1}^a\sum_{j=1}^b \mu_{ij} }{b} - a \cdot \frac{\sum_{i=1}^a \sum_{j=1}^b \mu_{ij} }{ab} =0 \tag{7.38} \end{align}

Also, define the estimator $E: X(={\mathbb R}^{abn}) \to \Theta(={\mathbb R}^{a} )$ by

\begin{align} E(x) = \Big(\frac{\sum_{j=1}^b\sum_{k=1}^n x_{ijk}}{bn} - \frac{\sum_{i=1}^a \sum_{j=1}^b \sum_{k=1}^n x_{ijk}}{abn} \Big)_{i=1,2,\ldots, a } = \Big( x_{i \bullet \bullet } - x_{\bullet \bullet \bullet } \Big)_{i=1,2,\ldots, a } \tag{7.39} \end{align}

Now we have the following problem:

where we assume that

\begin{align} \mu_{1 \bullet} = \mu_{2 \bullet} = \cdots=\mu_{a \bullet} = \mu_{\bullet \bullet } \end{align}

that is,

\begin{align} \pi_1 (\omega )=(\alpha_1, \alpha_2, \cdots, \alpha_a )=(0,0, \cdots, 0) \end{align}

Namely, consider the null hypothesis $H_N=\{ (0,0, \cdots, 0) \}$ $(\subseteq \Theta= {\mathbb R}^a )$. Let $0 < \alpha \ll 1$.

Then, find the largest ${\widehat R}_{{H_N}}^{\alpha; \Theta}$ $( \subseteq \Theta)$ (independent of $\sigma$) such that

| $(B_1):$ | the probability that a measured value $x(\in{\mathbb R}^{abn} )$ obtained by $ {\mathsf M}_{L^\infty ({\mathbb R}^{ab} \times {\mathbb R}_+ )} ( {\mathsf O}_G^{{{abn}}} = (X(\equiv {\mathbb R}^{{{abn}}}), {\mathcal B}_{\mathbb R}^{{{abn}}}, {{{G}}^{{{abn}}}} ), S_{[(\mu=(\mu_{ij}\;|\; i=1,2,\cdots, a, j=1,2,\cdots,b ), \sigma )]} ) $ satisfies that \begin{align} E(x) \in {\widehat R}_{{H_N}}^{\alpha; \Theta} \end{align} is less than $\alpha$. |
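
The averages in (7.33) and the estimator $E$ of (7.39) are straightforward to compute. The following is a minimal sketch in plain Python, assuming a balanced layout stored as a nested list `x[i][j][k]`; the helper names `marginal_means` and `estimator` and the sample data are hypothetical.

```python
def marginal_means(x):
    """Return the averages of (7.33) for a balanced a*b*n layout x[i][j][k]."""
    a, b, n = len(x), len(x[0]), len(x[0][0])
    cell = [[sum(x[i][j]) / n for j in range(b)] for i in range(a)]    # x_{ij.}
    row = [sum(cell[i]) / b for i in range(a)]                         # x_{i..}
    col = [sum(cell[i][j] for i in range(a)) / a for j in range(b)]    # x_{.j.}
    grand = sum(row) / a                                               # x_{...}
    return cell, row, col, grand


def estimator(x):
    """E(x) of (7.39): the vector of row means minus the grand mean."""
    _, row, _, grand = marginal_means(x)
    return [r - grand for r in row]


# Hypothetical data with a = 2, b = 2, n = 2 (illustration only).
x = [[[1.0, 3.0], [2.0, 4.0]],
     [[5.0, 7.0], [6.0, 8.0]]]
e = estimator(x)   # row means are 2.5 and 6.5, the grand mean is 4.5
```

Because the layout is balanced, averaging the cell means gives the same row and grand means as averaging the raw observations, which is why the sketch can reuse `cell` for both.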

Here, put

\begin{align} & \| \theta^{(1)}- \theta^{(2)} \|_\Theta = \sqrt{ \sum_{i=1}^a \Big(\theta_{i}^{(1)} - \theta_{i}^{(2)} \Big)^2 } \nonumber \\ & \qquad (\forall \theta^{(\ell)} =( \theta_1^{(\ell)}, \theta_2^{(\ell)}, \ldots, \theta_a^{(\ell)} ) \in {\mathbb R}^{a}, \; \ell=1,2 ) \nonumber \end{align}

Motivated by Theorem 5.6 (Fisher's maximum likelihood method), define and calculate $\overline{\sigma}(x) \Big(= \sqrt{{\overline{SS}}(x)/(abn)} \Big)$ as follows.

\begin{align} & {\overline{SS}}(x)= {\overline{SS}}((x_{ijk})_{i=1,2, \ldots, a,\;\; j=1,2, \ldots, b,k=1,2, \ldots, n }) \nonumber \\ := & { \sum_{i=1}^a \sum_{j=1}^b \sum_{k=1}^n (x_{ijk} - x_{ij \bullet})^2 } = { \sum_{i=1}^a \sum_{j=1}^b \sum_{k=1}^n (x_{ijk} - \frac{\sum_{k=1}^n x_{ij k}}{n})^2 } \nonumber \\ = & { \sum_{i=1}^a \sum_{j=1}^b \sum_{k=1}^n ((x_{ijk}-\mu_{ij}) - \frac{\sum_{k=1}^n ( x_{i jk}-\mu_{ij})}{n})^2 } \nonumber \\ = & {\overline{SS}}(((x_{ijk}- \mu_{ij})_{i=1,2, \ldots, a,\;\; j=1,2, \ldots, b })_{k=1,2, \cdots, n}) \tag{7.40} \end{align}

Define the semi-distance $d_\Theta^x$ (in $\Theta = {\mathbb R}^a$) by

\begin{align} & d_\Theta^x (\theta^{(1)}, \theta^{(2)}) = \frac{\|\theta^{(1)}- \theta^{(2)} \|_\Theta}{ \sqrt{{\overline{SS}}(x)} } \qquad (\forall \theta^{(1)}, \theta^{(2)} \in \Theta={\mathbb R}^{a}, \forall x \in X={\mathbb R}^{abn} ) \tag{7.41} \end{align}

Recall the estimator $E: X(={\mathbb R}^{abn}) \to \Theta(={\mathbb R}^{a} )$ defined in (7.39):

\begin{align} E(x) = \Big(\frac{\sum_{j=1}^b\sum_{k=1}^n x_{ijk}}{bn} - \frac{\sum_{i=1}^a \sum_{j=1}^b \sum_{k=1}^n x_{ijk}}{abn} \Big)_{i=1,2,\ldots, a } = \Big( x_{i \bullet \bullet } - x_{\bullet \bullet \bullet } \Big)_{i=1,2,\ldots, a } \nonumber \end{align}

Therefore, writing $\pi$ for $\pi_1$,

\begin{align} & \| E(x) - \pi (\omega )\|^2_\Theta \nonumber \\ = & || \Big(\frac{\sum_{j=1}^b\sum_{k=1}^n x_{ijk}}{bn} - \frac{\sum_{i=1}^a \sum_{j=1}^b \sum_{k=1}^n x_{ijk}}{abn} \Big)_{i=1,2,\ldots, a } - \Big( \alpha_i \Big)_{i=1,2, \ldots, a } ||_\Theta^2 \nonumber \\ = & {\small || \Big(\frac{\sum_{j=1}^b\sum_{k=1}^n x_{ijk}}{bn} - \frac{\sum_{i=1}^a \sum_{j=1}^b \sum_{k=1}^n x_{ijk}}{abn} \Big)_{i=1,2,\ldots, a } - \Big( \frac{\sum_{j=1}^b \mu_{ij} }{b} - \frac{\sum_{i=1}^a \sum_{j=1}^b \mu_{ij} }{ab} \Big)_{i=1,2, \ldots, a } ||_\Theta^2 } \nonumber \\ = & || \Big( \frac{\sum_{k=1}^n \sum_{j=1}^b(x_{ijk}-\mu_{ij})}{bn} - \frac{\sum_{i=1}^a \sum_{j=1}^b \sum_{k=1}^n (x_{ijk}-\mu_{ij})}{abn} \Big)_{i=1,2, \ldots, a } ||_\Theta^2 \nonumber \end{align}

and thus, if the null hypothesis $H_N$ is assumed (i.e., $\mu_{i \bullet} -\mu_{\bullet \bullet} =\alpha_i=0$ $(\forall i=1,2,\ldots, a )$),

\begin{align} = & || \Big( \frac{\sum_{k=1}^n \sum_{j=1}^bx_{ijk}}{bn} - \frac{\sum_{i=1}^a \sum_{j=1}^b \sum_{k=1}^n x_{ijk}}{abn} \Big)_{i=1,2, \ldots, a } ||_\Theta^2 = \sum_{i=1}^a(x_{i \bullet \bullet} - x_{\bullet \bullet \bullet})^2 \\ & \tag{7.42} \end{align}

Thus, for any $\omega=((\mu_{ij})_{i=1,2, \ldots, a,\; j=1,2, \ldots, b}, \sigma)$ $(\in \Omega= {\mathbb R}^{ab} \times {\mathbb R}_+ )$, define the positive number $\eta^\alpha_{\omega}$ $(> 0)$ such that:

\begin{align} \eta^\alpha_{\omega} = \inf \{ \eta > 0: [{G}^{abn}(E^{-1} ( {{ Ball}^C_{d_\Theta^{x}}}(\pi(\omega) ; \eta)))](\omega ) \le \alpha \} \tag{7.43} \end{align}

Assume the null hypothesis $H_N$. Let us now calculate $\eta^\alpha_{\omega}$ as follows. By (7.41) and (7.42),

\begin{align} & E^{-1}({{ Ball}^C_{d_\Theta^{x} }}(\pi(\omega) ; \eta )) =\{ x \in X = {\mathbb R}^{abn} \;:\; d_\Theta^x (E(x), \pi(\omega )) > \eta \} \nonumber \\ = & \{ x \in X = {\mathbb R}^{abn} \;:\; \frac{ \sum_{i=1}^a ( x_{i \bullet \bullet} - x_{\bullet \bullet \bullet} )^2}{ \sum_{i=1}^a \sum_{j=1}^b\sum_{k=1}^n (x_{ijk} - x_{ij \bullet})^2 } > \eta^2 \} \tag{7.44} \end{align}

That is, for any $\omega =((\mu_{ij})_{i=1,2,\ldots,a, \;j=1,2,\ldots,b}, \sigma) \in \Omega$ such that $\pi( \omega ) (= (\alpha_1, \alpha_2, \ldots, \alpha_a) )\in H_N (=\{(0,0, \ldots, 0)\})$,

\begin{align} & [{{{G}}}^{abn} ( E^{-1}({{ Ball}^C_{d_\Theta^{x} }}(\pi(\omega) ; \eta )) )] (\omega) \nonumber \\ = & {\small \frac{1} {({ {\sqrt{2 \pi } \sigma} })^{abn}} \underset{ E^{-1}({{ Ball}^C_{d_\Theta^{x} }}(\pi(\omega) ; \eta )) } {\int \cdots \int} \exp[- \frac{ \sum_{i=1}^a \sum_{j=1}^b \sum_{k=1}^n (x_{ijk} - \mu_{ij} )^2 }{2 \sigma^2} ] \times_{k=1}^n \times_{j=1}^b \times_{i=1}^a d{x_{ijk} } } \nonumber \\ = & {\small \frac{1} {({ {\sqrt{2 \pi } \sigma} })^{abn}} \underset{ \frac{ \sum_{i=1}^a ( x_{i \bullet \bullet} - x_{\bullet \bullet \bullet} )^2}{ \sum_{i=1}^a \sum_{j=1}^b\sum_{k=1}^n (x_{ijk} - x_{ij \bullet})^2 } > \eta^2} {\int \cdots \int} \exp[- \frac{ \sum_{i=1}^a \sum_{j=1}^b \sum_{k=1}^n (x_{ijk} - \mu_{ij} )^2 }{2 \sigma^2} ] \times_{k=1}^n \times_{j=1}^b \times_{i=1}^a d{x_{ijk} } } \nonumber \\ = & {\small \frac{1} {({ {\sqrt{2 \pi }} })^{abn}} \underset{ \frac{ \frac{ bn \sum_{i=1}^a ( x_{i \bullet \bullet} - x_{\bullet \bullet \bullet} )^2}{a-1} }{ \frac{ \sum_{i=1}^a \sum_{j=1}^b\sum_{k=1}^n (x_{ijk} - x_{ij \bullet})^2 }{ab(n-1)} } > \frac{\eta^2 a b^2 n (n-1)}{a-1} } {\int \cdots \int} \exp[- \frac{ \sum_{i=1}^a \sum_{j=1}^b \sum_{k=1}^n (x_{ijk} )^2 }{2 } ] \times_{k=1}^n \times_{j=1}^b \times_{i=1}^a d{x_{ijk} } } \\ & \tag{7.45} \end{align}

Here the last equality uses the change of variables $x_{ijk} \mapsto (x_{ijk} - \mu_{ij})/\sigma$, under which the ratio defining the domain of integration is unchanged by (7.40) and (7.42).

| $(C_2):$ | by the formula of Gauss integrals (Formula 7.8(C) in $\S$7.4), we finally obtain the following. |

\begin{align} (7.45) = \int_{\frac{\eta^2 a b^2 n (n-1)}{a-1}}^{\infty} p_{(a-1,ab(n-1)) }^F (t) \, dt \tag{7.46} \end{align}

where $p_{(a-1,ab(n-1)) }^F$ is the probability density function of the $F$-distribution with $(a-1,ab(n-1))$ degrees of freedom. Thus, it suffices to calculate the $\alpha$-point $F_{ab(n-1), \alpha}^{a-1}$, that is, the value for which $\int_{F_{ab(n-1), \alpha}^{a-1}}^\infty p_{(a-1,ab(n-1)) }^F (t) \, dt = \alpha$. Hence we see

\begin{align} (\eta^\alpha_{\omega})^2 = F_{ab(n-1), \alpha}^{a-1} \cdot \frac{a-1}{a b^2 n (n-1)} \tag{7.47} \end{align}

Therefore, we get ${\widehat R}_{{H_N}}^{\alpha; \Theta}$ (or ${\widehat R}_{{H_N}}^{\alpha; X}$), the $(\alpha)$-rejection region of $H_N =\{(0,0, \ldots, 0)\}( \subseteq \Theta= {\mathbb R}^a)$, as follows:

\begin{align} {\widehat R}_{{H_N}}^{\alpha; \Theta} & {\small = \bigcap_{\omega =((\mu_{ij})_{i=1,\ldots,a,\; j=1,\ldots,b}, \sigma ) \in \Omega (={\mathbb R}^{ab} \times {\mathbb R}_+ ) \mbox{ such that } \pi(\omega)= (\alpha_i)_{i=1}^a \in {H_N}=\{(0,0,\ldots,0)\}} \{ E({x}) (\in \Theta) : d_\Theta^{x} (E({x}), \pi(\omega)) \ge \eta^\alpha_{\omega } \} } \nonumber \\ & = \{ E({x}) (\in \Theta) : \frac{ (bn \sum_{i=1}^a ( x_{i \bullet \bullet} - x_{\bullet \bullet \bullet} )^2)/(a-1)}{ (\sum_{i=1}^a \sum_{j=1}^b\sum_{k=1}^n (x_{ijk} - x_{ij \bullet})^2) /(ab(n-1)) } \ge F_{ab(n-1), \alpha}^{a-1} \} \\ & \tag{7.48} \end{align}

Thus,

\begin{align} & {\small {\widehat R}_{{H_N}}^{\alpha; X}= E^{-1}({\widehat R}_{{H_N}}^{\alpha; \Theta}) = \{ x (\in X) : \frac{ (bn \sum_{i=1}^a ( x_{i \bullet \bullet} - x_{\bullet \bullet \bullet} )^2)/(a-1)}{ (\sum_{i=1}^a \sum_{j=1}^b\sum_{k=1}^n (x_{ijk} - x_{ij \bullet})^2) /(ab(n-1)) } \ge F_{ab(n-1), \alpha}^{a-1} \} } \\ & \tag{7.49} \end{align}

| $\fbox{Note 7.4}$ | Note that the only purely mathematical step is (C$_2$). Deriving (7.46) from (7.45) is not easy, but it is a purely mathematical problem. |
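
To make the test concrete, the following minimal sketch in plain Python evaluates the row-effect statistic and compares it with a critical value. It assumes the standard textbook form of the statistic, with numerator $bn\sum_{i}(x_{i \bullet \bullet} - x_{\bullet \bullet \bullet})^2/(a-1)$ and denominator ${\overline{SS}}(x)/(ab(n-1))$. The function names, the sample data, and the constant `F_CRIT_1_4` are hypothetical; the value 7.71 is the tabulated upper 5% point of the $F$-distribution with $(1, 4)$ degrees of freedom and must be replaced by the appropriate table value for other $a$, $b$, $n$, $\alpha$.

```python
def row_effect_f(x):
    """Textbook F statistic for the row effect of a balanced layout x[i][j][k]."""
    a, b, n = len(x), len(x[0]), len(x[0][0])
    cell = [[sum(x[i][j]) / n for j in range(b)] for i in range(a)]   # x_{ij.}
    row = [sum(cell[i]) / b for i in range(a)]                        # x_{i..}
    grand = sum(row) / a                                              # x_{...}
    # Between-rows sum of squares, weighted by the bn observations per row.
    ss_rows = b * n * sum((r - grand) ** 2 for r in row)
    # Within-cell sum of squares, i.e. SS-bar(x) of (7.40).
    ss_within = sum((x[i][j][k] - cell[i][j]) ** 2
                    for i in range(a) for j in range(b) for k in range(n))
    return (ss_rows / (a - 1)) / (ss_within / (a * b * (n - 1)))


def rejects(x, f_crit):
    """True if x lies in the rejection region for the given critical value."""
    return row_effect_f(x) >= f_crit


# Hypothetical data with a = 2, b = 2, n = 2 (illustration only).
x = [[[1.0, 3.0], [2.0, 4.0]],
     [[5.0, 7.0], [6.0, 8.0]]]
F_CRIT_1_4 = 7.71   # assumed: upper 5% point of F(1, 4) from standard tables
f_value = row_effect_f(x)          # (32 / 1) / (8 / 4) = 16
decision = rejects(x, F_CRIT_1_4)
```

The critical value is passed in as a parameter so that the sketch stays free of third-party dependencies; a statistics library could supply it instead.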